Instagram has unveiled new safety features designed to protect users, in particular young people, from ‘sextortion’ and intimate image abuse.

The platform’s owner Meta, which also owns Facebook, WhatsApp and Threads, has confirmed it will begin testing a nudity filter in direct messages (DMs) on Instagram.

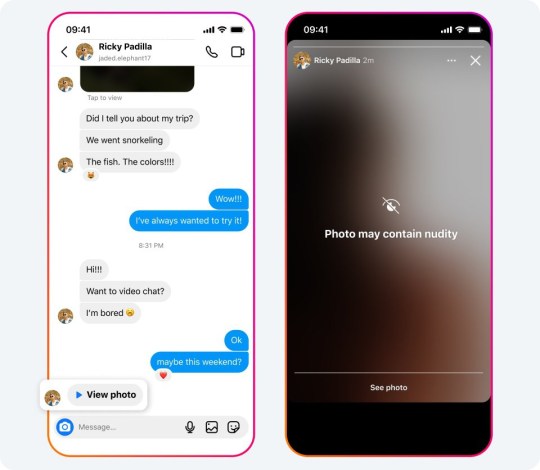

Called Nudity Protection, this feature will be on by default for those aged under 18 and will automatically blur images sent to users which are detected as containing nudity, better protecting users from seeing unwanted nudity in their DMs.

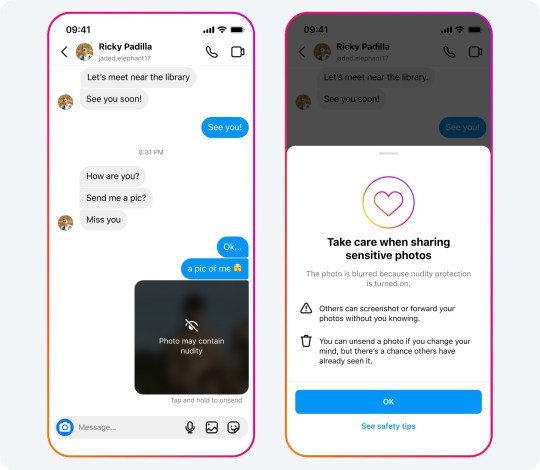

When receiving nude images, users will also see a message urging them not to feel pressure to respond, and an option to block the sender and report the chat.

With the filter turned on, people sending images containing nudity will also see a message reminding them to be cautious when sending sensitive photos, and be given the chance to unsend these photos.

The tool uses on-device machine learning to analyse whether an image contains nudity, meaning it will work inside end-to-end encrypted chats, and Meta said it will only see any images if a user chooses to report them to the company.

What is sextortion?

Sextortion occurs when images or videos are captured or sent during an online sexual exchange, and the victim is extorted and blackmailed for their intimate content.

‘Financial sextortion is a horrific crime,’ Meta said in a blog post on the updates.

‘We’ve spent years working closely with experts, including those experienced in fighting these crimes, to understand the tactics scammers use to find and extort victims online, so we can develop effective ways to help stop them.

‘We’re also testing new measures to support young people in recognising and protecting themselves from sextortion scams.’

Elsewhere, the social media giant said it was testing new detection technology to help identify accounts potentially engaging in sextortion scams and limit their ability to interact with everyone, but especially younger users.

Meta said message requests from these suspicious accounts would be routed straight to a user’s hidden requests folder.

Suspicious accounts will no longer see the ‘Message’ button on a teenager’s profile, even if they are already connected. The firm said it was also testing hiding younger users from these accounts in people’s follower lists to make them harder to find.

Jake Moore, global adviser at cybersecurity firm ESET, said: ‘With over a quarter of under-18s in the UK who have sent someone an intimate photo or video of themselves reporting having had their intimate photos misused, either through the recipient sharing them publicly or threatening to post them online – it is vital action is taken.

‘However, many virtual content creators do not make their platforms with safeguards in mind from the design phase.

‘The new tool Instagram is testing to fight “sextortion” could potentially offer protection to victims from this form of blackmail involving intimate images.’

Meta added that it was also testing new pop-up messages for people who may have interacted with such accounts – directing them to support and help if they need it.

In addition, the company said it was expanding its work with other platforms to share details about accounts and behaviours that violate child safety policies as part of the Lantern programme created last year.

‘This industry cooperation is critical, because predators don’t limit themselves to just one platform – and the same is true of sextortion scammers,’ Meta said.

